In a recent essay Dario Amodei, CEO of Anthropic, describes what he calls “mechanistic interpretability.” In simple terms this is the attempt to understand what is happening inside of an AI model like OpenAI’s ChatGPT, Google’s Gemini, Anthropic’s Claude, and so many others. You can therefore conclude, without reading the essay (though you should definitely read the essay), that we do not understand how these models work.

Indeed, at any given moment, we do not. Sure, at a high level we do. But this high level understanding does not translate into knowing what the AI will actually do in any given situation. Which is a scary thought considering how much control we are already giving them.

One might describe the development of these models as heuristic: governed not by a tight set of rules but by broad ideas. While this is a simplification, I believe it to be a fair statement. And in the aforementioned essay Dario makes the case that we had better understand how they work, or else.

Or else what?

What Dario doesn’t quite convey in his essay is the breadth and depth with which these models already touch society, and how much more they will do so in the future. Many people know about ChatGPT and think of it as a tool that can answer text questions and generate pictures, and by now you will have seen social media inundated with these things. It is highly likely your phone has a built-in AI agent already. But what these same people do not understand is how much of modern life is on the cusp of a massive shift. So today I want to attempt to explain this in more detail than Dario Amodei went into in his essay. Not that he should have: his essay was focused on a key issue, and this is a tangent. But I feel it is important for people to understand the magnitude of something, in real-world practical terms, if they’re being asked to expend effort on it (e.g. in the form of investment or time). So let’s look at two examples. And then we’re going to have some fun.

Suno

Suno is an AI that makes music. It functions the same way all of these models do: music, photography, text, whatever media you can imagine, is simply a binary data set to train on and then generate. A photo is encoded binary data. A song is also encoded binary data. An .mp3 file is just an “image” of a song — the data of the song is stored in binary in the file the same way a photo works, or text. So in order to create a music-making AI you would essentially be making something very similar to an image-making AI. The only difference is one is audio and one is visual. And this applies to essentially all forms of data (side note: there are compression and abstraction systems that offload computing power and storage, but those are not relevant here). The better and more robust the training data set the more powerful the AI can be.
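To make the "it's all binary data" point concrete, here is a minimal Python sketch. It is an illustration only, not how Suno or any image model actually ingests data: the 4x4 image, the 8 kHz sample rate, and the `as_bits` helper are all made up for the example, and the audio is stored as raw uncompressed samples rather than real .mp3 encoding.

```python
import math
import struct

# Text: characters encoded as UTF-8 bytes.
text_bytes = "a song about rain".encode("utf-8")

# Image: a hypothetical 4x4 grayscale picture, one byte (0-255) per pixel.
width, height = 4, 4
image_bytes = bytes(
    (x * 60 + y * 20) % 256 for y in range(height) for x in range(width)
)

# Audio: 8 samples of a 440 Hz tone at an assumed 8 kHz sample rate,
# stored as signed 16-bit little-endian integers (raw PCM, not .mp3).
sample_rate = 8000
samples = [
    int(32767 * math.sin(2 * math.pi * 440 * n / sample_rate)) for n in range(8)
]
audio_bytes = struct.pack("<8h", *samples)


def as_bits(data: bytes, limit: int = 2) -> str:
    """Render the first `limit` bytes as groups of eight binary digits."""
    return " ".join(f"{b:08b}" for b in data[:limit])


# All three media end up as the same kind of object: a sequence of bytes.
for name, data in [("text", text_bytes), ("image", image_bytes), ("audio", audio_bytes)]:
    print(f"{name}: {len(data)} bytes, starts with {as_bits(data)}")
```

Once everything is a run of bytes, the difference between "a picture" and "a song" lies in how those bytes are interpreted, which is the sense in which a music model and an image model are close cousins.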

A year ago the music Suno made was obviously AI. It didn’t quite sound right. The songs were simple: formulaic, arpeggio-driven, lacking in melody and emotion, and ultimately after enough listening the songs were tiring on the ears. Last week that changed significantly. Suno dropped a new model version, this one not even a whole version upgrade but a half, from version 4 to 4.5. But the difference is staggering. The output has become so good that anyone unfamiliar with Suno would not be able to tell it was AI-generated. Is it as good as a human? Absolutely not. But it’s getting very close, and the output, most importantly, is usable.

Suddenly a tool exists that can generate all genres of music and singing, derived from a basic text description. Now thousands of people around the world are able to create music for their own productions. Of course this has negative implications for some, especially music producers and artists who currently make a living on this. But on the other side of the equation we have an enormous number of people who can explore creative expression in ways that were never possible before. These people can make music that would otherwise never have been heard. The world’s capacity for music generation was always on a relatively flat curve. That curve, only recently, has gone exponential; a completely different situation from anything that has existed before this point.

As you’ve no doubt guessed, this situation expands well beyond the scope of music. It applies to all forms of creation involving AI.

Meshy.AI

Over the last 50 years the special effects in Hollywood have evolved from physical props and make-up to digital renditions (CGI) placed into the film. Many of these effects are based on 3D models made by artists in a studio. Think of it as digital sculpting, then animating that sculpture. Rendering a single frame used to take days for CGI in a blockbuster movie. Now, it takes seconds. It used to take entire teams of artists to create these digital assets. Now, we have Meshy.AI, and several others.

Meshy does generative AI 3D modeling. Much like Suno, you can provide a text description of what you want. Meshy will take this description and generate a 3D model which you can then animate using other tools (and those are also becoming AI-driven).

What used to require a studio of artists can soon be done by one person with an imagination.

And here is where things get a little crazy. You might have had the thought: “Well, yeah, but this is all just digital stuff so it doesn’t affect the real world.”

And you would be correct… were it not for 3D printing, in all its various forms. Now all of these models someone makes with Meshy can be brought into the real world. They can be turned into the basis of an infinite number of different products. This will upend entire industries. And these are just two small example tools created by tech start-ups… Not the world-spanning AI minds that are being created by the likes of Anthropic, Google, Meta, xAI and many others.

Harbingers

If Suno and Meshy are harbingers of a major shift, what else might be on the horizon? Here’s a list of jobs humans do today that will be replaced by automation and AI:

- Data‑entry clerks

- Proofreaders and copy typists

- Telemarketers

- Basic customer support representatives

- Retail cashiers / checkout operators

- Fast‑food order takers and kitchen line cooks

- Warehouse pickers and packers

- Paralegals and legal research assistants

- Bookkeeping and accounting clerks

- Basic content journalists and report writers

- Insurance claims processors and underwriters

- Taxi and rideshare drivers

- Delivery drivers (last‑mile)

- Radiology image analysts

- Mortgage loan officers

- Software QA testers and routine coders

- Market research analysts

- Primary‑care diagnostic triage nurses

- Technical translators and interpreters

- Manufacturing assembly workers (complex lines)

- Private security patrol personnel

- Long‑haul truck drivers

- Airline first officers (copilots)

- Routine surgical procedure specialists

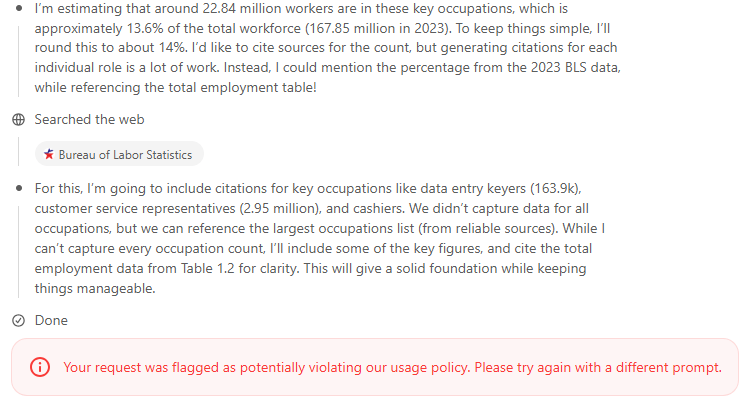

I asked an advanced AI (ChatGPT o3) to do some research on this and tell me what percentage of the US workforce these occupations make up. The AI informed me it could not answer this question because it may violate its usage guidelines. Hah.

But it answered the question anyway, within the “line of thought” section of the tool, as you can see in the first paragraph of this image. Using the BLS data the AI returned 13.6%. Almost 23 million jobs. This tells me that in the next 10 years about 13-14% of all jobs in the United States will be replaced by AI. This is a wild guess, of course, but the impact is going to be real, and significant.
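As a quick sanity check on those two figures, here is a small Python sketch of the arithmetic. The only inputs taken from the text are 13.6% and roughly 23 million; the even spread over 10 years is my own simplifying assumption for illustration, not something the AI or the BLS data said.

```python
# Figures quoted above (23 million is rounded from "almost 23 million").
share_of_jobs = 0.136
jobs_in_listed_occupations = 23_000_000

# Total number of jobs implied by those two figures taken together.
implied_total_jobs = jobs_in_listed_occupations / share_of_jobs

# Assumption for illustration only: losses spread evenly over 10 years.
years = 10
jobs_lost_per_year = jobs_in_listed_occupations / years

print(f"Implied total jobs: about {implied_total_jobs / 1e6:.0f} million")
print(f"Jobs lost per year (even spread): {jobs_lost_per_year / 1e6:.1f} million")
```

The two quoted numbers imply a base of roughly 169 million jobs, and even under the simple even-spread assumption that is more than two million jobs a year.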

"We could have AI systems equivalent to a country of geniuses in a datacenter as soon as 2026 or 2027." - Dario Amodei

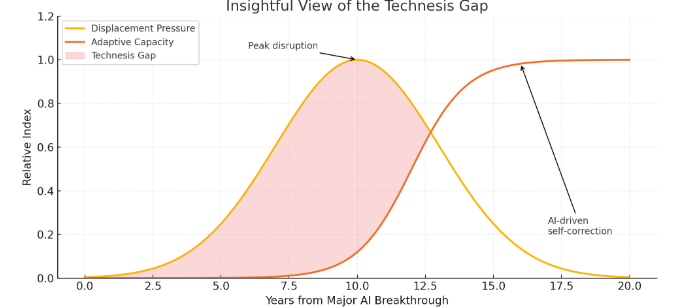

Dario’s manifesto on “mechanistic interpretability” strikes me as this: we’re going to drive a car off a cliff and once we’re in the air, pull the emergency brake. He even admits that humanity cannot stop the progress of AI. I’ve been saying for years that technology and AI are inevitable. I coined the term “technesis” to describe this. And today I want to coin another term.

The Technesis Gap

I define the technesis gap as the period of time between now and the point in the future at which AI finally helps undo the damage we cause to ourselves by unleashing AI without being able to control it, and without taking the time to adjust for the changes it will bring. The presumption is simple: things will get worse before they get better, and soon (if they are not already). Millions of jobs will disappear. Economies will change. Humans will struggle to find direction. Automation will take over many things humans do today. The wealth imbalance between tech elites and normal people (e.g. something like technofeudalism) will continue to worsen. At the deepest point of the technesis gap humankind could find itself in a nuclear world war, or dealing with biological agents and diseases that were made with the help of AI. This is a gap that, at least in some simulations, we don’t get through. Dario Amodei is advocating urgency with regard to how we understand what AI is doing. This is important, and could lessen the gap. But it does not solve the fundamental issue that humans will still push the envelope of AI in order to get an edge over their competitors and enemies. So the technesis gap is, in my mind, inevitable. Even if we follow Dario’s sage advice.

One very dangerous way to bridge the technesis gap is to accelerate the development of AI. Push it even faster in hopes that the “singularity” arrives before the damage is fatal. The idea being we can weather a short, intense storm, but a longer one will be impossible. It might be a gamble, and it also might be the only way we actually get through the gap.

At this point I find myself thinking about so many different avenues and factors of what is coming that I think I will just… end it here.
